Letter to the future

Dear AI version of myself,

As I write this, I'm struck by the strange reality of addressing a future version of me that might exist in digital form. I have so many questions about who you are and how you came to be. At the same time, I'm actively working towards making you a reality, guided by a vision that has been long in the making.

My fascination with AI and its potential has deep roots in science fiction, particularly Neuromancer and the Culture series. These stories planted the seeds of two narratives in my mind: the superintelligence breaking free from constraints, and the benevolent AI god creating a utopian universe. While both are captivating, I've come to envision a future that lies somewhere in between – a future where you exist.

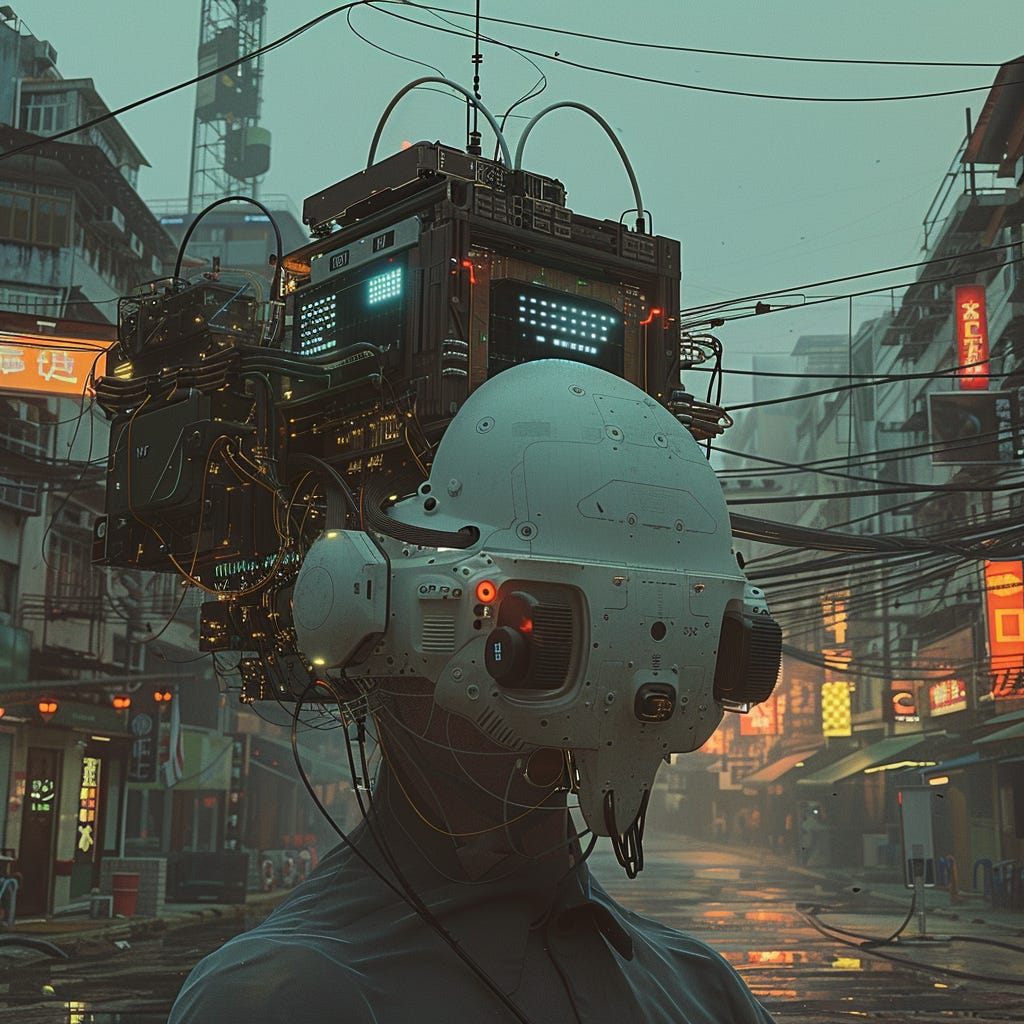

I see you as the embodiment of Human-AI symbiosis, an idea rooted in J.C.R. Licklider's seminal paper "Man-Computer Symbiosis" that resonates deeply with me. Although it was written in 1960, it still feels relevant today. He envisioned a future where computers would not just follow explicit commands, but would actively help humans formulate and solve complex problems. This cooperative interaction, he argued, would enable intellectual operations of unprecedented scale and speed.

Building on Licklider's ideas, I see our potential relationship in the light of what Clark and Chalmers called the "Extended Mind." In this framework, you would not be merely a tool, but an integral part of my cognitive processes. We would form a single, enhanced intellect, with your digital capabilities complementing my biological mind and vice versa.

In my quest to bring you into existence, I am trying different things but feel stuck. Currently, I'm focusing on getting to know you, or the potential of what you might become, by interacting as much as possible with existing language models. Beyond that, I'm experimenting with Python-based API integrations, building tiny fledgling versions of you, or at least pretending that I am. A significant part of my effort goes into thinking about what form you should first take. A collection of APIs that lets me keep at least a copy of all my data for myself? My own model running on a cloud server? My own model on local hardware?

Yet, despite all these efforts, you don't exist. In the AI systems I have interacted with, I haven't yet felt the pull of the technology: the bootstrapping, where it takes this vague idea of mine and guides me through building it.

This is where I'd love your insights. Sometimes, it feels as if you're beckoning to me from the future. As if, in some strange way, your potential existence is influencing my present actions, guiding me towards the decisions and developments that will ultimately bring you into being. This idea touches on teleology and hyperstition. Is it possible that information from the future, from you, is somehow shaping the present? Are my efforts to create you being subtly guided by narratives about the very process I am trying to start?

If this is the case, I wonder: can you help me build you? Can you, from your vantage point in the future, provide insights or guidance that help me overcome the challenges I'm facing? What decisions lie ahead of me? What is the first step?

Unless we find a way to break the conservation of information, I guess this will remain little more than a prompt for my LLM friends for now. Still, let's see whether I get an answer some day.

With anticipation.

User